The Problem

The Client

Open Context is an open digital repository of cultural heritage and archaeology data maintained by a 501(c)(3) nonprofit organization, the Alexandria Archive Institute. The platform has published 150 projects, representing 2 million items contributed by more than 1,000 data authors. Open Context provides two main services: data publishing and data exploration.

Although archiving is an important part of data preservation and access, our clients were concerned that a focus on archiving alone meant that data would often be poorly described, hampering user understanding and data reuse. Additionally, for privacy reasons, they did not track users and thus knew very little about how users interacted with the platform.

Our four-member group, the Emphasis Lab, was tasked with investigating Open Context’s user experience and providing findings and recommendations that could be implemented in future versions of the platform.

Project Duration: January 2022 - May 2022

research objectives

Understand the motivations of Open Context’s primary user groups in accessing archaeological data and use cases of applying the data.

Determine if the users accessing Open Context are able to find the information they seek in a format valuable to their needs.

Identify areas in which the user experience may be improved and provide concrete recommendations for future development.

methodology

System Analysis

Market Research

Assess Usability

System Analysis

interaction mapping

We visually mapped the Open Context site to understand site components and how they work with each other.

Explore Open Context’s information architecture and how pages link internally and externally

Become familiar with the system’s functions, features, and hierarchical organization structures

Findings + Recommendations

user interviews

We conducted semi-structured interviews to understand the needs of Open Context’s core user bases.

We completed six interviews of 60 minutes each, with two participants in each of the following categories:

Current Open Context users

Open Context team members

Archaeological researchers, educators, and enthusiasts who are potential users of Open Context

Interview transcripts were analyzed via thematic analysis. At least two team members reviewed each interview session, extracting and annotating meaningful information as affinity notes in Miro, a virtual whiteboard tool. Notes with similar themes were clustered and arranged into an affinity wall.

Findings + Recommendations

-

Although Open Context’s core product is Database Archive and Search, the search function is surprisingly difficult to find: users must navigate to a specific dropdown menu in the primary navigation.

Recommendation: Place the Search bar on the Home Page, similar to the Google home page and other database competitors.

-

To new users, the differences between Search types (Projects, Media, Data Records, Everything) are not immediately clear, since each Search type includes similar functions and advanced filters.

Recommendation: In future studies, seek to understand user needs in order to inform which types of search to highlight in navigation. For example, are users looking to search by region? What does “data record” mean to them?

-

In our interviews, we found that most participants had concerns about ambiguity in database search results. Large data resources also bring noisy results and cluttered data management for users.

Recommendation: The implementation of a guide that conveys best practices for a calculated search and search logic could be advantageous to Open Context; it could be embedded into the search tool or seen as a separate function, aiding users who are less experienced with Open Context’s search algorithm.

-

Translating database information into a usable, readable format is a top concern among all types of users. Currently, most databases do not fully satisfy this need, resulting in the manual maintenance of PDFs, articles, and papers. A large portion of data sits in the form of Excel spreadsheets.

Recommendation: We suggest drilling into the Open Context export function and exploring its capabilities. There could also be methods for users to customize data output/input, either through system-generated templates or a written how-to manual. As with previous recommendations, supplying documentation on report customization could also be beneficial.

-

Despite Open Context’s data storage and refined mapping procedures, database accessibility can be a problem according to interviewees. Users’ choice of database is largely driven by what is easily accessible, most familiar, and most relevant to their area of research. Developing partnerships to expand adoption can be more challenging than the technical work of creating online infrastructure.

Recommendation: The team should continue to develop partnerships with researchers, government agencies, academic institutions, and libraries to spotlight Open Context as a comprehensive and universal database accessible to researchers and the general public. In addition, Open Context should continue to be advertised as a useful tool for educational purposes for curriculum design in data analysis and visualization.

-

The interviews indicated two justifications for why some data is kept private or, at the very least, restricted to approved access. The first use case is the sharing of “sensitive data,” such as material from a disadvantaged community or restricted information held by governmental bodies. The second use case is data produced in early-stage projects where the material is not ready for public consumption.

Recommendation: Open Context should consider ways to balance open access with ethical obligations to protect sensitive materials and materials not yet ready for public consumption.

Affinity wall with thematic groupings of interview findings and quotes (interviewees identified by color-coded notes)

Market Research

Comparative Evaluation

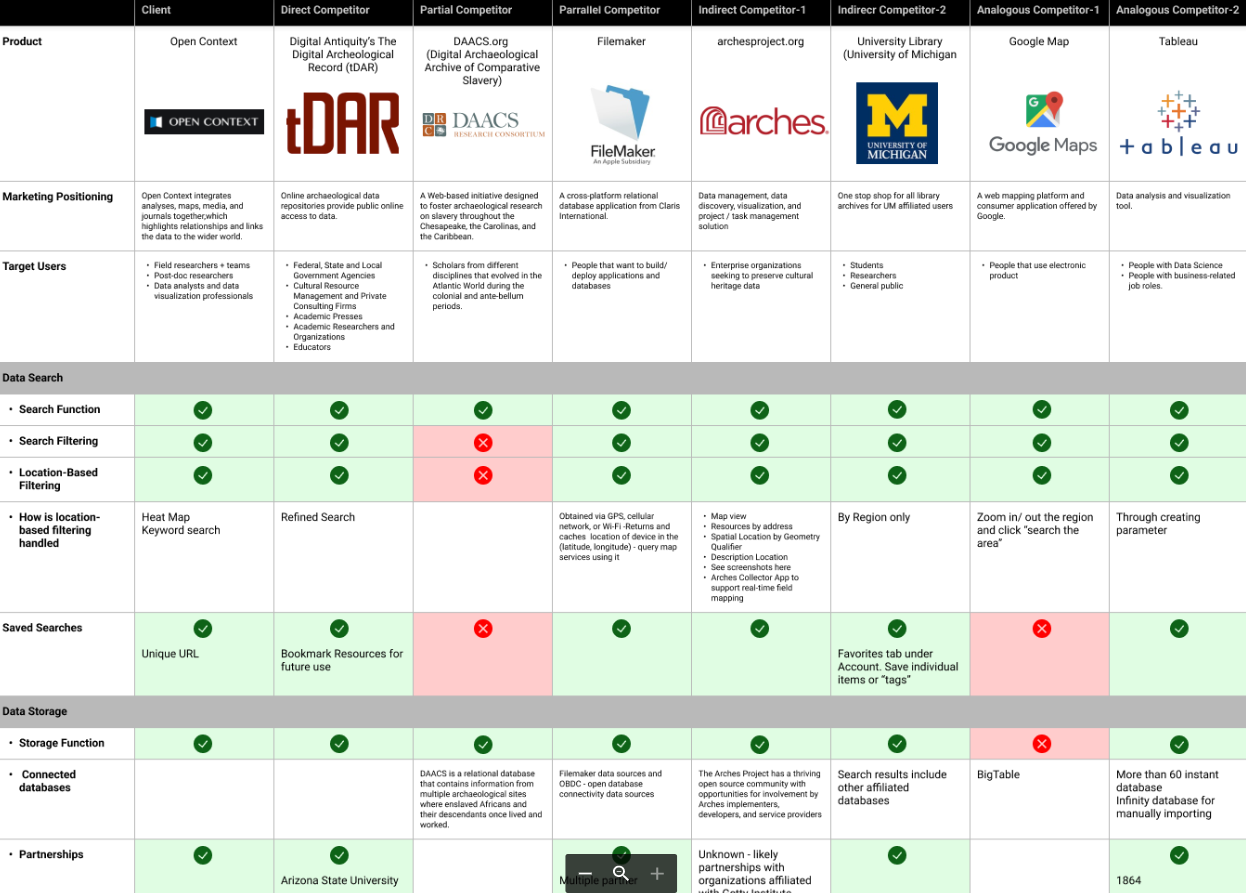

We compared Open Context’s system, positioning and product against competitive systems and other systems with tangential similarity.

To identify and evaluate the key usability strengths and weaknesses of Open Context’s competitors, we first determined which systems were appropriate comparisons. Because Open Context is a digital archaeological repository, we chose to focus on web platforms for the preservation of, and public online access to, archaeological research data. Based on Newman's taxonomy, we then selected seven competitors covering five types of comparison in the archaeological archives space.

Using the findings from our interview analysis, we created a matrix of 20+ features and tools and analyzed each competitor against data search, storage, and export. Our final comparison primarily focused on:

Marketing positioning

Partnerships

System accessibility

Other common functions

findings + recommendations

surveys

We conducted a quantitative analysis of user needs with a broader sample of participants representing Open Context’s core user base.

To gain insights to improve database exploration and search function of Open Context, we decided to target current and potential users:

All Open Context users

Archaeological researchers, educators, and enthusiasts who are potential users

Based on project constraints, the team set a target sample size of at least 50 responses. To gather information from the target audience, our team used a stratified sampling method to randomly select people from both of our target respondent populations. The survey questionnaire was drafted, reviewed by the client, then piloted with six people (Emphasis Lab and client contacts). The final survey was designed in Qualtrics.

To recruit survey participants, the team relied on the client to distribute the survey by posting links on Open Context’s Twitter account and sharing them via email distribution lists. The client estimated that the survey reached a few thousand potential participants, of whom 108 opened the survey, 76 answered at least one question, and 56 completed it, for a completion rate of 74% among those who started. Of the respondents, 54% had experience using Open Context. Among those who had not used Open Context, 92% described themselves as archaeology-related practitioners. Overall, 96% of respondents were within our target population.
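The response funnel above can be checked with a few lines of arithmetic. This is a minimal sketch in Python using only the three counts reported in the text; the variable and function names are ours, and reading the 74% as "completed out of those who answered at least one question" is an assumption based on the figures, not something the survey tool reported.

```python
# Counts reported in the text above.
opened = 108     # people who opened the survey
started = 76     # people who answered at least one question
completed = 56   # people who finished the survey


def rate(part: int, whole: int) -> int:
    """Return part/whole as a percentage rounded to the nearest whole number."""
    return round(100 * part / whole)


# Assumption: the reported completion rate uses "started" as its denominator.
start_rate = rate(started, opened)          # share of openers who began answering
completion_rate = rate(completed, started)  # share of starters who finished
overall_rate = rate(completed, opened)      # share of openers who finished

print(f"Started after opening: {start_rate}%")
print(f"Completed after starting: {completion_rate}%")
print(f"Completed after opening: {overall_rate}%")
```

Stating the denominator matters here: measured against everyone who opened the survey, the completion figure would be noticeably lower than 74%.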

Findings + recommendations

-

We found that searching and browsing data remains one of Open Context’s most distinctive features. However, Open Context has areas of opportunity in the visibility and display of these core functions. For instance, the implicit entry point to the site's search function may leave users searching for the search function. Among its competitors, tDAR highlights the search function and enhances usability by placing the search bar in the top-center of the home page.

Recommendation:

• Placing the Search Bar in the center of the homepage makes Search more visible and easier to access.

• The search types and filters highlighted could be reconsidered, for example Search by Region, Search by Object Type, or Search by File Access.

• Adherence to common design patterns improves intuitive product use, and stratifying varying levels of granularity makes the product more accessible to users of varying levels of archaeology and system knowledge.

-

All of Open Context’s competitors apart from DAACS and Google Maps allow logged-in users to save their searches and view past searches and results from their browsing history. Currently, Open Context supports saved searches only by supplying a unique URL for each search; there is no interface functionality (e.g. a “save” button that stores the search to a linked account).

Recommendation: Offering the option to create a user profile could be beneficial to recurring Open Context users. As part of the New Account onboarding process, users could elect to customize their data search process before active use. If this is implemented, we suggest that users log in to save and export data, while making clear that the data remains free and open to them. Once a user logs into their account, functions related to user history and preferences (saving searches, viewing search history, filtering/viewing previous searches and datasets) would become available.

-

Of the industry competitors that we researched, only FileMaker and Tableau support data report customization. FileMaker allows users to change the export order of the attributes and include data from related fields. This makes data export more flexible but comes with a steeper learning curve. Tableau, the industry-leading data analysis and visualization tool, allows users to clean, filter, and manipulate data before exporting.

Recommendation(s):

• To lower the barrier for non-technical users, user-friendly elements could be added to the data export window. For example, “drag and drop” may be more intuitive for a user to manipulate attributes in a data report.

• Multiple graphic templates could be provided, allowing users to visualize different properties of the data on the exported charts.

• Alongside the advanced data visualization options, a preview of graphs should be provided as feedback so users can check whether search results match their expectations.

-

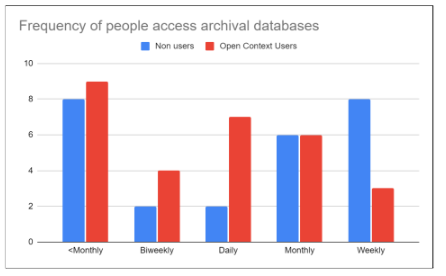

Individuals who have had experience with Open Context reported higher frequencies of archival database use. A positive correlation was found between the frequency of database use and users’ feelings about their experience working with archaeological archives/databases. Additionally, surveys indicated that twice as many Open Context users as non-users had experience storing/sharing data through an online database repository.

Recommendation: We recommend catering to both new and recurring users by establishing a guided onboarding experience within Open Context. First-time users creating an account would be met with an onboarding process (either in-system or as a written guide) that walks through the features of Open Context, and would be provided with documentation on how an open data repository operates.

-

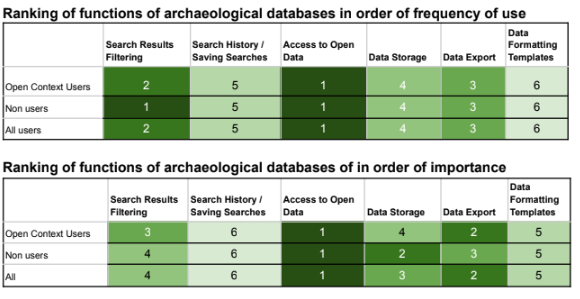

80% of respondents stated that Access to Open Data is the most important function of archaeological databases, and Data Export continues to place highly in frequency and importance (ranked #2 and #3 respectively). Results Filtering, although in the lower half in terms of importance, nonetheless came in at #2 among the most frequently used functions. Functions that cater to customized use of the product (such as Data Formatting Templates and Saved Search History) rank lower in importance and are considered nice-to-haves by respondents.

Recommendation: Based on these findings around relative importance and frequency of use, we recommend that Open Context continue prioritizing work focusing on the following areas:

● Expanding the Open Context database

● Improving the user experience of:

○ Search Filtering

○ Data Export Experience

-

For the users who reported “Extremely Uncomfortable” or “Somewhat Uncomfortable” regarding open data, their responses could be grouped into the following categories:

1. Data Privacy: Concerns surrounding the protection of data, as well as security concerns about databases if the data had to be pulled from other nations/internationally

2. Cultural Sensitivity: Research surrounding particular topics, such as data around ethnic groups or tribes could be sensitive and personal to the groups themselves

3. Ongoing Research: Until the research is fully published, the data should not be accessible to anyone

Recommendation: We recommend investing more time in education regarding how Open Context implements open data. Earlier interview participants expressed a desire to know exactly what Open Context is and how it works. The Open Context site could better market its service by explicitly stating how the data repositories operate while addressing some of the concerns brought up in our findings.

Finding: Open Context users are “expert” users

Finding: Current and future users express similar needs in archaeological database functions

Assess Usability

Heuristic evaluation

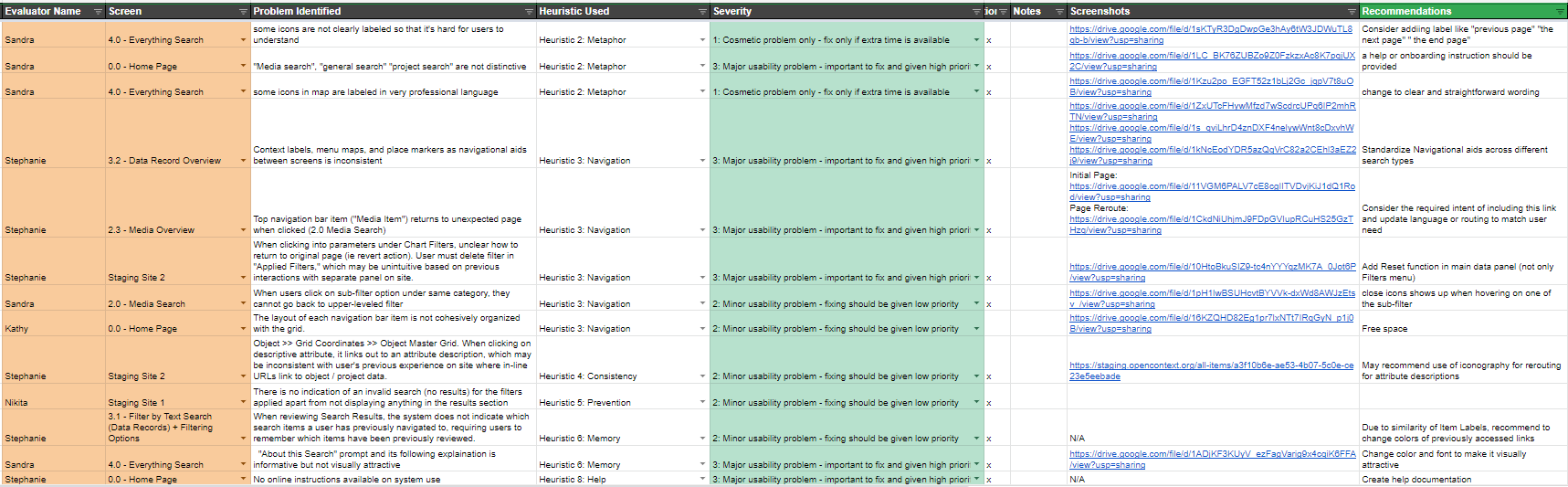

We assessed the Open Context Search product against a checklist of usability best practices.

usability testing

We observed users interacting with the Open Context search product to assess their ability to complete tasks and to understand their thought process.

Research Questions

Which areas of the site are the most difficult to navigate and why?

What functions do not perform as users expect?

How do users interact with the Search and Filter tools?

What do users think of the Open Context live site and the staging site?

Methodology

To understand how users interacted with Open Context, a testing plan was established, where participants were asked to complete a series of 5 system tasks over moderated virtual sessions. These task observations were followed by post-task and post-test questionnaires.

Study participants consisted of five individuals who had never used Open Context before:

Two database novices

Two experienced with databases

One who had never used an online database repository to store their work

These participants were recruited from survey respondents who opted into future studies, and directly sourced by the Open Context team. A screening questionnaire was distributed to ascertain fit to established criteria. The screener focused on participant demographic information, their self-identified expertise level with databases, and testing availability. Final participants were identified based on degree of database expertise and demographic information.

The Study

System tasks revolved around the following 5 Open Context functions and were accompanied by written and verbal instruction:

Project Search and locating data records

Advanced filtering process

Narrowing results using the chronological distribution slider

Data export and report customization

Map-based search in both live and staging environments

Findings + Recommendations

Group Heuristic Evaluation

The analysis performed by the Emphasis Lab is based on Nielsen’s 10 Usability Heuristics for User Interface Design. Using this framework, the team audited Open Context interfaces. Four evaluators performed two independent assessments to review general interface feedback and consistency across system elements. Using the heuristic checklist, each evaluator produced a list of “problems” rated on a scale from 0 (Not a usability issue) to 4 (Usability catastrophe). Independent assessment was followed by a team calibration to review problems against frequency and severity, and to agree on a single rating for each.
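One way to prepare for a calibration session like the one described above is to summarize the independent ratings for each problem beforehand. The sketch below is illustrative only: the team agreed on final ratings through discussion, and the median rule, the "spread of 2 or more" flag, the function name, and the sample ratings are all our assumptions. The labels for levels 1 to 3 follow Nielsen's published severity scale; levels 0 and 4 use the wording from the text.

```python
import math
from statistics import median

# 0-4 severity scale used in the heuristic evaluation.
SEVERITY_LABELS = {
    0: "Not a usability issue",
    1: "Cosmetic problem",
    2: "Minor usability problem",
    3: "Major usability problem",
    4: "Usability catastrophe",
}


def summarize(ratings: list[int]) -> dict:
    """Summarize one problem's independent ratings ahead of team calibration."""
    for r in ratings:
        if r not in SEVERITY_LABELS:
            raise ValueError(f"rating {r} is outside the 0-4 scale")
    # Assumption: start from the median, rounding a .5 tie up to the more severe level.
    starting = math.ceil(median(ratings))
    # Assumption: a gap of 2+ levels between evaluators warrants explicit discussion.
    spread = max(ratings) - min(ratings)
    return {
        "starting_rating": starting,
        "label": SEVERITY_LABELS[starting],
        "needs_discussion": spread >= 2,
    }


# Hypothetical ratings from four evaluators for two made-up problems.
print(summarize([2, 3, 3, 4]))
print(summarize([1, 1, 2, 1]))
```

A summary like this only seeds the conversation; the final rating still comes from the evaluators weighing frequency and severity together.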

Findings + Recommendations

-

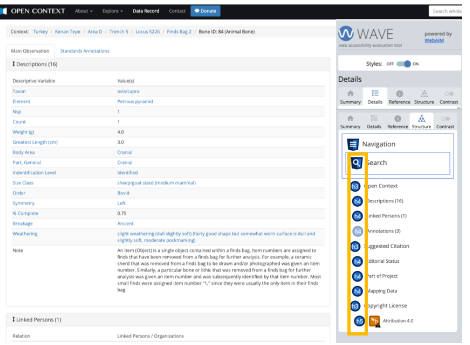

At times, Open Context lacks system status visibility and feedback. For example, some pages, such as Data Record entries, lack H1/H2 headers, which makes it unclear where users are in the site and what type of record they’re reviewing. There are also cosmetic problems in the system: heatmaps have no labels to signify what each shaded box indicates, and there is no "select all" option for applied filters (each must be applied individually).

Recommendation: Include headings that reflect the organization of the page. We recommend for all pages to begin with a header referencing the object / record / media being reviewed, with a main heading and a sub-header describing the entry type.

-

The heuristic evaluation found some website iconography misleading, because what users expect the icons to represent is inconsistent with their actual functions. For example, the “Info” icon is used in Open Context’s Filtering Options. Typically, such an icon opens “helper text” in a text bubble. However, this button opens a new page filled with computer jargon and/or additional content, which may be unexpected to users.

Recommendation: Align system elements with standard conventions to smooth users’ learning curves and improve the user experience. Instead of linking to a separate webpage, a short text snippet explaining the purpose of each filter option could be more intuitive.

-

A common framework for web accessibility is POUR (Perceivable, Operable, Understandable, Robust). With this in mind, the team audited the site using accessibility evaluation tools and keyboard navigation. Certain elements of the site were inaccessible through keyboard-based navigation. On the main map, all tabs apart from Mapped Results could not be reached using keyboard tabbing. Additionally, certain stylistic choices throughout the system were disadvantageous for users with color blindness. This was most prominent in data and media records, where small blue text appears on a gray background.

Recommendation: We recommend considering methods to complement mouse-based navigation with text entry or non-mouse-based navigation. Also, future development could be accompanied by periodic assessments using one of many accessibility tools, including:

● axe DevTools

● WAVE browser extensions

● W3C Markup Validation Service

● Adobe Color Accessibility Tools

Finding: Lack of system feedback. Note the lack of clear visual headers on the page.

Finding: Accessibility and Inclusion. 18 contrast errors were identified on this media record entry page.
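Contrast errors like those above are typically flagged when a text/background pair falls below the WCAG contrast ratio threshold. As a rough illustration of what the accessibility tools compute, here is a minimal Python sketch of the WCAG 2.x contrast ratio formula. The function names are ours, and the blue and gray values in the usage lines are placeholders, not colors sampled from the Open Context site.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1 (identical colors) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Level AA requires 4.5:1 for normal text and 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


# Placeholder colors standing in for "small blue text on a gray background".
text_color, page_color = "#3366cc", "#cccccc"
print(f"{contrast_ratio(text_color, page_color):.2f}:1")
print("Passes AA for small text:", passes_aa(text_color, page_color))
```

Running a check like this on the actual link and background colors during design review would catch contrast issues before they reach an audit.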

-

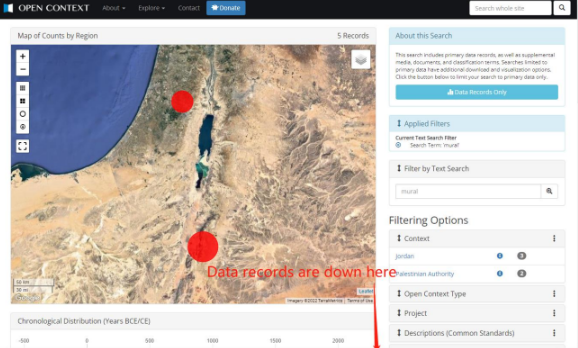

Our usability tests revealed a level of confusion with the filtering mechanism. Two participants focused on keyword search, ignoring the Filtering Options entirely or misunderstanding how keyword search translated to menu filters. Overall, 60% of participants ignored the Filtering Options menu bar to some degree throughout Tasks 2 and 3, and were unaware that top-level filters held nested filters that could be expanded.

Recommendation: We recommend that Open Context follow common design patterns for filters. The selections should offer varying levels of granularity yet remain intuitive and distinguishable. All types of users should understand them and know what to expect when sub-menus or filters are selected. Also, placing the Filtering Options on a fixed side navigation bar that stays in view as the user scrolls between the map and data records keeps the filters available throughout all site interactions.

-

Accompanying icons and prompts were not always clear. For example, the filter icon on the side of the chronological distribution timeline was inconspicuous and unfamiliar, and when interacting with it, most participants were not able to understand intuitively that the Filter Button would refresh their data set. Many relied on the helper language displayed when hovering over the filter button to understand its function.

Recommendation: We suggest improving consistency by using more commonly recognized iconography throughout the system, or by replacing icons with simple text where appropriate (e.g. “Apply Filters”). Also, given that some icons are too small to attract attention, we recommend making clickable icons larger. For example, one user did not recognize the download icon in the geolocation map and clicked on it without realizing what it would do. Larger icons help users understand features more easily.

Finding: Filters go unnoticed. This is a screenshot of an initiated search, where users need to scroll down to see the returned data record results.

Finding: Iconography. The left image shows the current download button on the Open Context map, where the icon is relatively small. The right image is a mockup showing a larger and clearer icon.

Reflections

Thanks for viewing!

Areas for consideration

At times, the team relied on personal networks for study participation, which could have generated familiarity bias during tests/interviews. Additionally, due to our time frame (Jan 2022-May 2022), some of the processes and techniques may have been less robust than ideal, or were beyond the project scope. However, all aspects of this investigative process indicated that Open Context remains an invaluable tool to both data authors and data seekers alike and should continue to perform as such.

My takeaways

Do not assume user paths. Many aspects of Open Context were only discoverable through the user interviews, surveys, and testing. These provided new insights from various perspectives that would not have emerged from our team’s limited experience with the platform.

There is more to usability than architecture. Findings regarding accessibility, jargon, and iconography proved to be just as important as the actual site structure when it came to evaluating the user experience on a holistic level. I will be sure to stay cognizant of these methods and approaches when gauging usability of products in the future.